Two weeks ago I posted an article about improving fwrite’s performance. fwrite is normally used to store binary data in some custom pre-defined format. But we often don’t need or want to use such low-level functions. Matlab’s built-in save function is an easy and very convenient way to store data in both binary and text formats. This data can later be loaded back into Matlab using the load function. Today’s article will show little-known tricks of improving save’s performance.

MAT is Matlab’s default data format for the save function. This format is publicly available and adaptors are available for other programming languages (C, C#, Java). Matlab 6 and earlier did not employ automatic data compression; Matlab versions 7.0 (R14) through 7.2 (R2006a) use GZIP compression; Matlab 7.3 (R2006b) and newer can use an HDF5-variant format, which apparently also uses GZIP (level-3) compression, although MathWorks might have done better to pay the license cost of employing SZIP (thanks to Malcolm Lidierth for the clarification). Note that Matlab’s 7.3 format is not a pure HDF5 file, but rather an HDF5 variant that uses an undocumented internal format.

The following table summarizes the available options for saving data using the save function:

| save option | Available since | Data format | Compression | Major functionality |
|---|---|---|---|---|
| -v7.3 | R2006b (7.3) | Binary (HDF5) | GZIP | >2GB files, class objects |
| -v7 | R14 (7.0) | Binary (MAT) | GZIP | Compression, Unicode |
| -v6 | R8 (5.0) | Binary (MAT) | None | N-D arrays, cell arrays, structs |
| -v4 | All releases | Binary (MAT) | None | 2D data |
| -ascii | All releases | Text | None | Tab/space delimited |

HDF5 uses a generic format to store data of any conceivable type, and has a non-negligible storage overhead in order to describe the file’s contents. Moreover, Matlab’s HDF5 implementation does not by default compress non-numeric data (struct and cell arrays). For this reason, HDF5 files are typically larger and slower than non-HDF5 MAT files, especially if the data contains cell arrays or structs. This holds true for both pure-HDF files (saved via the hdf and hdf5 set of functions, for HDF4 and HDF5 formats respectively), and v7.3-format MAT files.

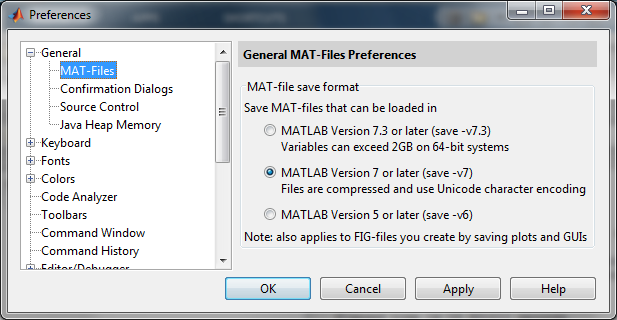

Perhaps for this reason the default preference is for save to use –v7, even on new releases that support –v7.3. This preference can be changed in Matlab’s Preferences/General window (or we could always specify the –v7/-v7.3 switch directly when using save):

Over the years, MathWorks has fixed several inefficiencies when reading HDF5 files (ref1, ref2). Some of these fixes include patches for older releases, and readers who do not use the latest Matlab release (currently R2013a) are advised to download and install the appropriate patches. There are still a couple of open bugs regarding HDF5 performance and compression that may affect save.

One might think that due to the generic descriptive file header and the increased I/O, as well as the open bugs, the -v7.3 (HDF5) format would always be slower than the -v7 (MAT) format in save and load. This is indeed often the case, but not always:

```matlab
A = randi(20,1000,1200,40,'int32');   % 48M int32s => 184 MB
B = randn(500,1000,20);               % 10M doubles => 78 MB
ops.algo = 'test';                    % non-numeric

tic, save('test1.mat','-v7','ops','A','B'); toc
% => Elapsed time is 11.940455 seconds.   % file size: 114 MB

tic, save('test2.mat','-v7.3','ops','A','B'); toc
% => Elapsed time is 6.963135 seconds.    % file size: 116 MB
```

In this case, the HDF5 format was much faster than MAT, more than offsetting the benefit of MAT’s slightly reduced I/O. This example shows that we need to check our specific application’s data files on a case-by-case basis. For some files -v7 may be better; for others -v7.3 is best. The widely-accepted conventional wisdom of only using the new -v7.3 format for enormous (>2GB) files is inappropriate. In general, if the data contains many non-numeric elements, the resulting -v7.3 HDF5 file is much larger and slower than the -v7 MAT file, while if the data is mostly numeric, then -v7.3 would be faster and comparable in size.
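Since the best format depends on the data, a small timing loop makes the case-by-case check easy to repeat. The following is only a sketch: the variables A and ops are small placeholders (not the article's test set), the scratch files go to the temp folder, and a single run is sensitive to disk caching, so it is worth repeating a few times on real data.

```matlab
% Time save() for each MAT-file format on the variables we actually store.
% A and ops are small placeholders; substitute your own data.
A   = randi(20, 200, 200, 10, 'int32');   % numeric payload (hypothetical size)
ops = struct('algo', 'test');             % non-numeric payload

formats = {'-v7.3', '-v7', '-v6'};
results = struct('format', formats, 'seconds', [], 'megabytes', []);

for k = 1:numel(formats)
    fname = [tempname '.mat'];            % scratch file in the temp folder
    t0 = tic;
    save(fname, formats{k}, 'A', 'ops');
    results(k).seconds = toc(t0);
    info = dir(fname);                    % file size as stored on disk
    results(k).megabytes = info.bytes / 2^20;
    delete(fname);
    fprintf('%-6s %8.3f secs %8.2f MB\n', formats{k}, ...
            results(k).seconds, results(k).megabytes);
end
```

The results struct array keeps one entry per format, so the numbers can be compared or logged as the application's data mixture changes.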

Surprisingly, we can often sacrifice compression to (paradoxically) achieve better performance, for both save and load, at the expense of much larger file size. This is done by saving the numeric data in uncompressed HDF5 format, using the savefast utility on the Matlab File Exchange, using the same syntax as save:

```matlab
tic, savefast('test3.mat','ops','A','B'); toc
% => Elapsed time is 3.164903 seconds.   % file size: 259 MB
```

Even better performance, and a similar or somewhat smaller file size than savefast, can be achieved by using save’s uncompressed format -v6. The -v6 format is consistently faster than both -v7 and -v7.3, at the expense of a larger file size. However, save -v6 cannot save Unicode and class objects, and is limited to <2GB file sizes.

Another lesson here is that depending on the relative size of the numeric and non-numeric data being saved, a different data format may be advisable. As the application evolves and the saved data’s size and mixture change, we might need to revisit the format decision. Here is a summary on one specific computer, using the numeric variable A (184 MB) above, together with a cell-array of varying size:

```matlab
B = num2cell(randn(1,dataSize));   % dataSize = 1e3, 1e4, 1e5, 1e6
```

| Numeric data | Non-numeric data | save -v7.3 | save -v7 | save -v6 | savefast |
|---|---|---|---|---|---|
| 184 MB | 0.114 MB | 3.8 secs, 43 MB | 9.3 secs, 40 MB | 1.6 secs, 183 MB | 2.1 secs, 183 MB |
| 184 MB | 1.14 MB | 4.3 secs, 46 MB | 9.5 secs, 40 MB | 1.6 secs, 184 MB | 2.1 secs, 186 MB |
| 184 MB | 11.4 MB | 12.6 secs, 78 MB | 9.9 secs, 41 MB | 2.7 secs, 189 MB | 11.1 secs, 219 MB |
| 184 MB | 114 MB | 87.5 secs, 402 MB | 13.9 secs, 50 MB | 5.8 secs, 244 MB | 85.2 secs, 544 MB |

As noted, and as can be clearly seen in the table, compression is not enabled for non-numeric data in the save -v7.3 (HDF5) option and savefast. However, we can implement our own save variant that does compress, by using low-level HDF5 primitives in m-code or MEX C code.

A general conclusion that can be drawn from all this is that in the specific case of save, the additional time for compression is often NOT offset by the reduced I/O. So the general rule is to use -v6 whenever possible.

Although save -v6 does not compress its data, it does store data in a more compact manner than Matlab memory. So, while our test set’s cell array held 114 MB of Matlab memory, on disk it was only stored within 61 MB (= 244 MB - 183 MB).

The performance of saving non-numeric data can be dramatically improved (and the file size reduced correspondingly) by manually serializing the data into a series of uint8 bytes that can easily be saved very compactly. When loading the files, we would simply deserialize the loaded data. I recommend using Christian Kothe’s excellent Fast serialize/deserialize utility on Matlab’s File Exchange. The huge gain in performance and file size when using serialized data is absolutely amazing (esp. for data types that -v6 cannot save), for all the save alternatives:

```matlab
B = num2cell(randn(1,1e6));   % 1M cell array, 114 MB in Matlab memory
B_ser = hlp_serialize(B);
```

| Saved variable | Matlab memory | save -v7.3 | save -v7 | save -v6 | savefast |
|---|---|---|---|---|---|
| B | 114 MB | 83 secs, 361 MB | 4.5 secs, 9.2 MB | 3.5 secs, 61 MB | 83 secs, 361 MB |
| B_ser | 7.6 MB | 1.21 secs, 7.4 MB | 1.17 secs, 7.4 MB | 0.93 secs, 7.6 MB | 0.94 secs, 7.6 MB |

Serializing data in this manner enables save -v6 to be used even for Unicode and class objects (that would otherwise require -v7), as well as huge data (that would otherwise require >2GB files, and usage of -v7.3). One user has reported that the run-time for saving a 2.5GB cell-array of structs was reduced from hours to a single minute using serialization (despite the fact that he was using a non-optimized serialization, not Christian’s faster utility). In addition to the performance benefits, saving class objects in this manner avoids a memory leak bug that occurs when saving objects to MAT files on Matlab releases R2011b-R2012b (7.13-8.0).
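The full round trip (serialize, save, load, deserialize) might look like the sketch below. It assumes that hlp_serialize and its companion hlp_deserialize from the File Exchange submission are on the path. If they are not, it falls back to the undocumented built-ins getByteStreamFromArray and getArrayFromByteStream, which are unsupported and may change between releases. The cell array is deliberately smaller than the one timed above.

```matlab
% Pick a serializer: the File Exchange utility if it is on the path,
% otherwise the undocumented built-in byte-stream functions (assumption:
% these exist on R2010b and newer, but they are unsupported).
if exist('hlp_serialize', 'file') && exist('hlp_deserialize', 'file')
    serializeFcn   = @hlp_serialize;
    deserializeFcn = @hlp_deserialize;
else
    serializeFcn   = @getByteStreamFromArray;
    deserializeFcn = @getArrayFromByteStream;
end

B     = num2cell(randn(1, 1e4));      % cell array, smaller than in the article
B_ser = serializeFcn(B);              % flat uint8 vector

fname = [tempname '.mat'];            % scratch file in the temp folder
save(fname, '-v6', 'B_ser');          % plain numeric data, so -v6 is enough

loaded = load(fname, 'B_ser');        % load into a struct, not the workspace
B2     = deserializeFcn(loaded.B_ser);% rebuild the original cell array
delete(fname);
```

Loading into a struct keeps the serialized bytes out of the caller's workspace, so only the deserialized variable needs to be kept.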

When the data is purely numeric, we could use hdf5write or h5create + h5write, in addition to save and savefast (see related). Note that hdf5write will be phased out in a future Matlab release; MathWorks advises to use h5create + h5write instead. Here are the corresponding results for 184 MB of numeric data on a standard 5400 RPM hard disk and an SSD:

| | hdf5write | h5create + h5write (Deflate=0) | h5create + h5write (Deflate=1) | save -v7.3 | save -v7 | save -v6 | savefast |
|---|---|---|---|---|---|---|---|
| File size | 183 MB | 366 MB | 55 MB | 42 MB | 40 MB | 183 MB | 183 MB |
| Time (hard disk) | 4.4 secs | 14.3 secs | 7.4 secs | 6.1 secs | 10.8 secs | 4.2 secs | 4.3 secs |
| Time (SSD) | 2.1 secs | 0.2 secs | 4.5 secs | 4.1 secs | 9.5 secs | 1.5 secs | 1.6 secs |

As noted, Matlab’s HDF5 implementation is generally suboptimal. Better performance can be achieved by using the low-level HDF5 functions, rather than the high-level hdf5write and hdf5read functions.
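For reference, the h5create + h5write combination timed in the table can be sketched as follows. This uses the high-level functions, not the low-level HDF5 library interface. The dataset name /A, the reduced array size and the chunk size are illustrative assumptions, and the chunk size in particular is a guess that would need tuning for real data.

```matlab
A     = randi(20, 100, 120, 40, 'int32');   % smaller stand-in for the article's A
fname = [tempname '.h5'];                   % scratch file in the temp folder

% Deflate needs a chunked layout; the chunk size here is an arbitrary choice.
h5create(fname, '/A', size(A), ...
         'Datatype',  'int32', ...
         'ChunkSize', [100 120 1], ...
         'Deflate',   1);                   % 0 = no compression, 9 = maximum
h5write(fname, '/A', A);

A2   = h5read(fname, '/A');                 % read the whole dataset back
info = dir(fname);
fprintf('%.2f MB on disk\n', info.bytes / 2^20);
delete(fname);
```

The result is a plain HDF5 file rather than a MAT file, so it has to be read back with h5read (or another HDF5 reader) instead of load.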

In addition to HDF5, Matlab also supports the HDF4 standard, using a separate set of built-in hdf functions. Despite their common name and origin, HDF4 and HDF5 are incompatible; use different data formats; and employ different designs, APIs and Matlab access functions. HDF4 is generally much slower than HDF5.

While save’s -v7.3 format is significantly slower than the alternatives for storing entire data elements, one specific case in which -v7.3 should indeed be considered is when we need to update or load just a small part of the data, on R2011b or newer. This could potentially save a lot of I/O, especially for large MAT files where only a small part is updated or loaded.
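Partial access of this kind is available through the matfile function (R2011b and newer). The sketch below uses a placeholder variable A and a scratch file. It reads and then overwrites a small corner of the array without loading the rest.

```matlab
A     = rand(1000, 500);                    % placeholder data
fname = [tempname '.mat'];                  % scratch file in the temp folder
save(fname, '-v7.3', 'A');                  % efficient partial access needs -v7.3

m = matfile(fname, 'Writable', true);       % no variable data is loaded yet
block = m.A(1:10, 1:5);                     % reads only a 10x5 corner from disk
m.A(1:10, 1:5) = zeros(10, 5);              % rewrites only that corner on disk
[nRows, nCols] = size(m, 'A');              % size query without loading A

reloaded = load(fname, 'A');                % full load, to check the update
delete(fname);
```

Indexing into a matfile object requires explicit subscripts for every dimension, which is why both the row and the column range are given.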

Great post Yair. I myself had recently discovered that -v7.3 wasn’t being my friend when compared to -v7 in one of my simulations. Large-data save operations consume 100% of a single CPU, and large imagesc() figures take *minutes* to save. I don’t need random access to .mat files, so I have moved back to -v7 to save all my data and figures.

It seems to me that the time devoted to compression could be reduced if MATLAB used multithreaded compression routines, where the compression would be CPU-bound rather than IO-bound. In Ubuntu I have started to use the pigz (parallel gzip) and pbzip2 (parallel bzip2) utilities, which are happy to utilize all of my CPUs when compressing data. Deliberate parallelization into N jobs doesn’t always yield a speedup of a factor of N, but in this case I think you could get pretty close.

Serialization functions are a great find. Thank you for sharing !

There are undocumented functions in the MEX-API to serialize/deserialize mxArray’s:

http://stackoverflow.com/a/6261884/97160

https://github.com/kyamagu/matlab-serialization/

For those not wishing to deal with MEX files there are also two undocumented MATLAB functions which are apparently nothing more than a thin wrapper around mxSerialize and mxDeserialize. They are getByteStreamFromArray and getArrayFromByteStream.

The binary format seems to be 32 bit, so the largest variable I could successfully serialize and then deserialize was ~2 GiB in size (minus some overhead due to headers). But that’s probably sufficient for a lot of applications. The functions are available since MATLAB 2010b and it seems the binary format hasn’t changed since then, so they are pretty reliable/portable despite being undocumented. (I only tested using 64-bit MATLAB on OS X and Linux).

@Martin – Nice find! Thanks for reporting.

I see getByteStreamFromArray used in a single MATLAB m-file on R2010b (%matlabroot%/toolbox/matlab/scribe/private/getCopyStructureFromObject.m). It is no longer used (although it is still available) in R2013a. Likewise, getArrayFromByteStream is used on R2010b only in %matlabroot%/toolbox/matlab/scribe/private/getObjectFromCopyStructure.m, and is not used (although still available) in R2013a.

Now that’s what I call a deeply-hidden feature!

+5 if I could 🙂

While the HDF5-based v7.3 file format may have some disadvantages, it has one huge advantage for me: It can save everything in my workspace as long as there is enough disk space. You start to appreciate this once you spend hours/days to compute a multi-gigabyte result to later find out that it wasn’t saved because it was too big for the v7 file format and MATLAB stupidly does not consider this to be a fatal condition (I would have expected to get an exception/error) and instead happily continues. That’s why v7.3 is my default and I can happily wait a few more seconds/minutes on save.

@Martin – naturally, but what I tried to show in the article is that you can have the best of both worlds, by using savefast and serialization.

Saving Matlab O-O objects in the -v7.3 format has a bug in R2012a and R2012b (supposedly fixed in R2013a) which causes a memory leak when the objects are loaded back into Matlab. Not only do the normal issues with a memory leak apply, but there is also an issue that when you “clear” that variable, Matlab knows that all objects of that class have not been cleared, and will not pick up on modifications to the class’s definition, instead issuing the “Warning: Objects of ‘MyClass’ class exist” warning that everyone who does any O-O programming in Matlab is very familiar with.

This issue is an official bug report on the Mathworks website: http://www.mathworks.com/support/bugreports/857319

@Greg – thanks. Yet another reason to avoid -v7.3 …

Are you sure Matlab doesn’t compress structures/cells saved in v7.3?

I have exactly the opposite problem: I am not able to disable compression, which in v7.3 provides almost no space benefit for my data at the cost of extremely long save and load times.

When I save structures/cell arrays compression is still used. I think compression kicks in for any numeric object which is greater than 10000 bytes (so if you have large structure or cell contents I think they will still be compressed).

The serialization trick is really interesting, thanks!

What about the load function? Inside a function, I found that using assignin('base', variables) is faster than using evalin('base', load .mat containing the variables). I thought loading the variables from a .mat file would be faster than using the assignin command. Would really like to see the same kind of topic but on the load command.

Hello,

I’ve just tried to save a figure (around 2e4 double data points) with -v7.3 in R2014a, and it resulted in 100Mb file size instead of 1.4Mb with default -v7. A new bug maybe?

For me, the biggest advantage of savefast is that by saving uncompressed, a specific portion of an array can be loaded MUCH faster when using the matfile function. There seems to be some decompression overhead that is a function of the array size regardless of how little data you are asking for. For example, with a 250Mb file just using the save function, it takes a minimum of 2 seconds to extract data, even if I am only loading 10k elements. If the file is created by savefast it only takes .02 seconds to load 10k elements. This will be a huge improvement for my software.

Is it possible to save matfiles without creating the initial variable at the beginning?

I am continually adding output data to my matfile block by block (i.e., continually expanding its dimensions). I want to speed this up and I considered preallocating the space within the matfile (as you do with regular arrays before a loop).

However, my initial variable will be quite large (hence why I am block processing), and I don’t want to risk the possibility of a memory error, so ideally I’d like to create the matfile without first saving the variable to Matlab memory, as you did with your A and B variables.

Is there a way?

Not directly, but you could try using the low-level HDF5 functions for this.
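One way to approach this, sketched here with the high-level h5create and h5write functions rather than the low-level library calls, is to create a dataset with an unlimited dimension and append one block at a time, so that the full array never exists in Matlab memory. The dataset name /out and the block sizes are hypothetical, and the result is a plain HDF5 file, not a MAT file.

```matlab
fname     = [tempname '.h5'];               % scratch file in the temp folder
nRows     = 50;                             % hypothetical fixed dimension
blockCols = 100;                            % hypothetical columns per block
nBlocks   = 4;

% An unlimited (Inf) dimension lets the dataset grow on disk;
% unlimited dimensions require a chunked layout.
h5create(fname, '/out', [nRows Inf], 'ChunkSize', [nRows blockCols]);

for k = 1:nBlocks
    block = k * ones(nRows, blockCols);     % stand-in for one computed block
    start = [1, (k-1)*blockCols + 1];       % 1-based position of this block
    h5write(fname, '/out', block, start, size(block));
end

% Read back only the last block to check what was stored.
lastStart = [1, (nBlocks-1)*blockCols + 1];
lastBlock = h5read(fname, '/out', lastStart, [nRows blockCols]);
delete(fname);
```

Only one block is held in memory at any time; the on-disk dataset is extended by each h5write call that writes past its current extent.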